KVM

Overview

Morpheus KVM is a powerful, lower-cost alternative to other hypervisor offerings, and it is very capable of supporting complex shared storage and multiple networks across many hosts. This guide covers onboarding brownfield KVM clusters and provisioning new KVM clusters directly from Morpheus. Once created or onboarded, KVM clusters are associated with the chosen Cloud and can be selected as provisioning targets using existing Instance Types and automation routines. In this example, baremetal KVM hosts are added to a Morpheus-type Cloud, but similar combinations can be made with other Cloud types.

Requirements

At this time, Morpheus primarily supports CentOS 7 and Ubuntu-based KVM clusters, and these are the two base OS options when creating KVM clusters directly in Morpheus. It's possible to onboard KVM clusters built on other Linux distributions, such as SUSE Linux, but the user must ensure the correct packages are installed. The required packages are listed below.

kvm

libvirt

virt-manager

virt-install

qemu-kvm-rhev

genisoimage

Additionally, when onboarding a brownfield KVM cluster, Morpheus will attempt to add a new network switch called ‘morpheus’ and a storage pool.
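As a quick pre-flight check on a host you plan to onboard (for example, one built on a distribution where Morpheus does not install the packages for you), a small shell function can report which of the corresponding commands are missing. This is a sketch, not a Morpheus tool: it assumes that checking for the commands virsh, virt-install, genisoimage, and qemu-img is a reasonable proxy for the packages above. Package names differ between distributions, and virt-manager (a GUI) is not checked.

```shell
# Sketch: report which commands provided by the required packages are missing.
# Assumption: checking commands approximates checking packages
# (virsh from libvirt, virt-install, genisoimage, qemu-img from the qemu-kvm
# packages); the package names themselves differ between CentOS and Ubuntu.
kvm_missing_tools() {
  local missing="" cmd
  for cmd in virsh virt-install genisoimage qemu-img; do
    command -v "$cmd" >/dev/null 2>&1 || missing="$missing $cmd"
  done
  # Print the missing commands; empty output means everything was found.
  echo "${missing# }"
  # Return success only when nothing is missing.
  [ -z "$missing" ]
}

# Example:
# kvm_missing_tools || echo "install the missing packages before onboarding"
```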

When creating new clusters from Morpheus, users simply provide a basic Ubuntu or CentOS box. Morpheus installs the packages listed above as well as the Morpheus Agent, if desired. The same applies when adding a new host to an existing KVM cluster: users need only provide access to an Ubuntu or CentOS box, and Morpheus installs the required packages and applies sensible default configurations. Users can also add existing KVM hosts to a cluster. Once SSH access to the host is provided, if Morpheus detects that virsh is installed, it treats the host as a brownfield KVM host. Brownfield KVM hosts must have:

libvirt and virsh installed

A pool called morpheus-images defined as an image cache and ideally separate from the main datastore

A pool called morpheus-cloud-init defined which stores small disk images for bootup (this pool can be small)
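On a brownfield host, the two pools can be created ahead of time with standard virsh pool commands. The sketch below assumes directory-backed (dir) pools are acceptable for this purpose; the ensure_dir_pool function name and the target paths in the example calls are hypothetical placeholders, not Morpheus defaults.

```shell
# Sketch: create (if missing), start, and autostart a directory-backed
# libvirt storage pool. Assumption: a "dir" pool is acceptable here.
ensure_dir_pool() {
  local name="$1" target="$2"
  # Define and build the pool only if libvirt does not already know about it.
  if ! virsh pool-info "$name" >/dev/null 2>&1; then
    virsh pool-define-as "$name" dir --target "$target" || return 1
    virsh pool-build "$name" || return 1
  fi
  # pool-start fails on a pool that is already running, so check State first.
  if ! virsh pool-info "$name" 2>/dev/null | grep -q '^State:[[:space:]]*running'; then
    virsh pool-start "$name" || return 1
  fi
  virsh pool-autostart "$name"
}

# Example (run as root on the KVM host; paths are placeholders):
# ensure_dir_pool morpheus-images /var/lib/libvirt/morpheus-images
# ensure_dir_pool morpheus-cloud-init /var/lib/libvirt/morpheus-cloud-init
```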

Note

Morpheus creates (or, for brownfield hosts, uses) a morpheus-images pool that is separate from the main datastore. This host-local image cache speeds up clone operations. To keep the cache from filling completely, Morpheus purges it automatically once allocation reaches 80%: the least recently accessed volumes are deleted first until the cache is under 50% full again.
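To make the purge policy concrete, the following sketch selects which volumes would be removed under the 80%/50% rule. It illustrates the described behavior only and is not Morpheus's implementation. The input format (one "last-access-epoch size-bytes name" line per volume) and the function name are assumptions, and the function only prints names; it deletes nothing.

```shell
# Sketch of the purge policy described in the note. Reads lines of the form
# "<last-access-epoch> <size-bytes> <volume-name>" (hypothetical format) on
# stdin and prints the volumes that would be removed, oldest access first.
cache_purge_candidates() {
  local capacity="$1"
  sort -n -k1,1 | awk -v cap="$capacity" '
    { size[NR] = $2; name[NR] = $3; used += $2 }
    END {
      # Below the 80% trigger: nothing to purge.
      if (used < 0.8 * cap) exit
      # Remove least recently accessed volumes until usage is under 50%.
      for (i = 1; i <= NR && used >= 0.5 * cap; i++) {
        print name[i]
        used -= size[i]
      }
    }'
}
```

For example, with a capacity of 100 and three volumes of 30 each (90% used), the two least recently accessed volumes are selected, leaving usage at 30%.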

Creating the Cloud

Morpheus doesn’t include a KVM-specific Cloud type. Instead, other Cloud types (either pre-existing or newly created) are used, and KVM clusters are associated with the Cloud when they are onboarded or created by Morpheus. For example, a generic Morpheus-type Cloud could be created to associate with baremetal KVM clusters. Similarly, brownfield KVM hosts could be onboarded into an existing VMware vCenter Cloud. Other combinations are possible as well. In this section, a Morpheus Cloud is created and KVM hosts are associated with it so that they become Morpheus provisioning targets.

A Morpheus-type Cloud is a generic Cloud construct that isn’t designed to hook into a specific public or private cloud (such as VMware or Amazon AWS). Before onboarding an existing KVM host or creating one with the Morpheus UI tools, create the Cloud:

Navigate to Infrastructure > Clouds

Click + ADD

Select the Morpheus Cloud type and click NEXT

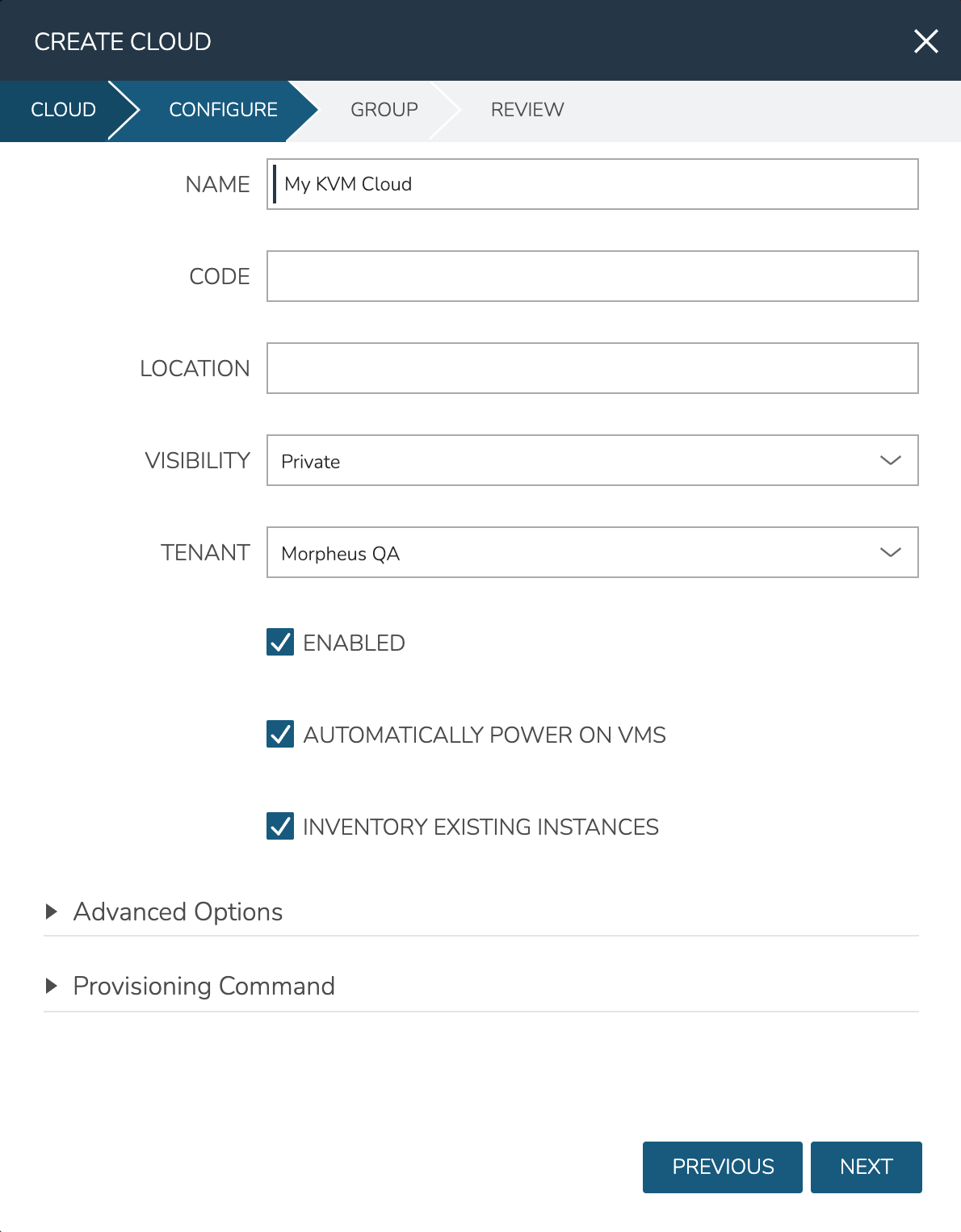

On the Configure tab, provide:

NAME: A name for the new Cloud

VISIBILITY: Clouds with Public visibility will be accessible in other Tenants (if your appliance is configured for multitenancy)

Automatically Power On VMs: Mark this box for Morpheus to handle the power state of VMs

Inventory Existing Instances: If marked, Morpheus will automatically onboard VMs from any KVM hosts associated with this Cloud

On the Group tab, create a new Group or associate this Cloud with an existing Group. Click NEXT

After reviewing your configuration, click COMPLETE

Onboard an Existing KVM Cluster

With the Cloud created, we can add existing KVM hosts from the Cloud detail page (Infrastructure > Clouds > Selected Cloud). From the Hosts tab, open the ADD HYPERVISOR menu and select “Brownfield Libvirt KVM Host”.

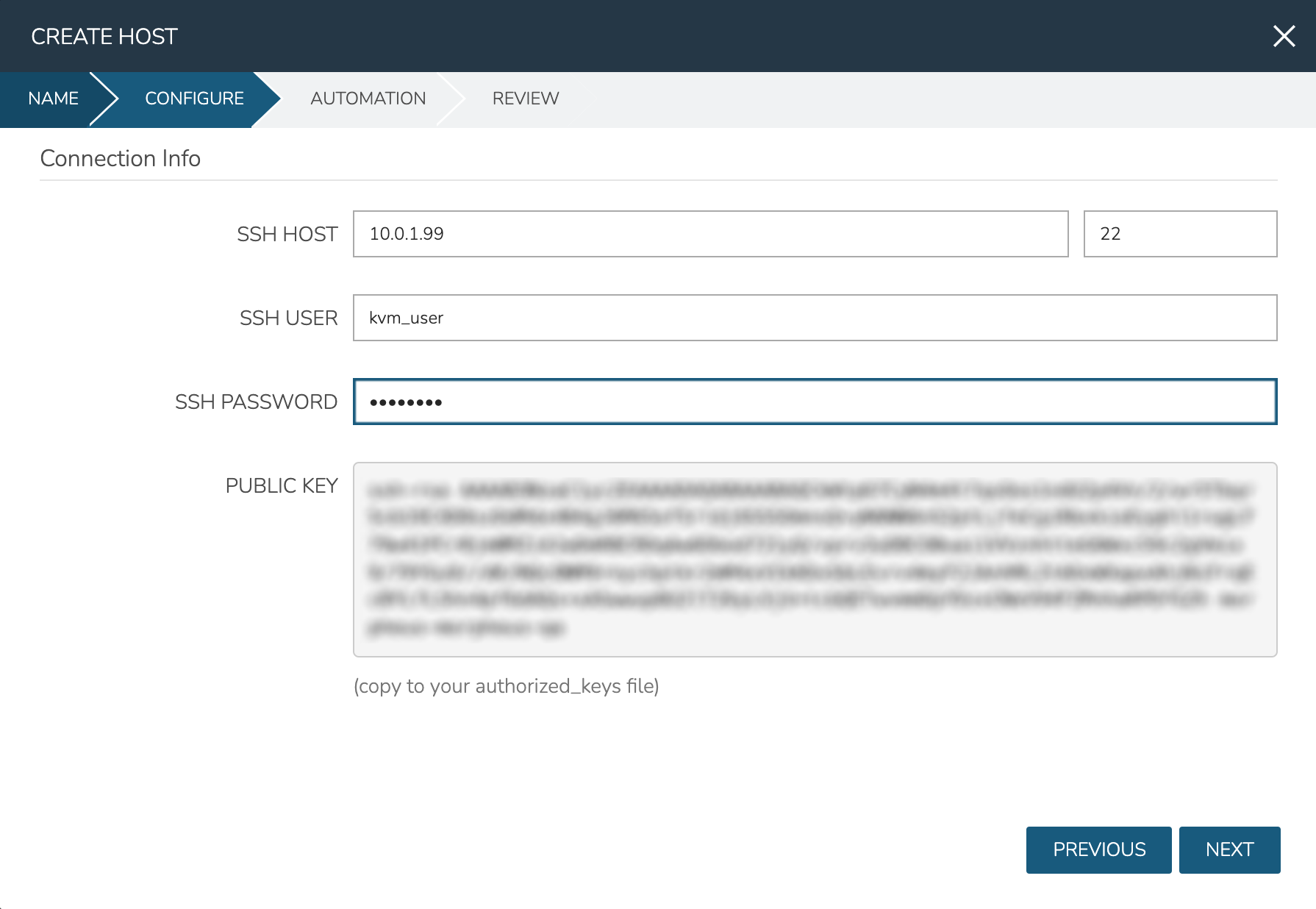

On the first page of the Create Host modal, enter a name for the onboarded KVM host in Morpheus and click NEXT. On the following page, enter the IP address of the host along with an SSH username and password for it. It’s recommended that you also copy the revealed SSH public key into that user’s ~/.ssh/authorized_keys file on the host. Click NEXT.
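If you prefer to install the key from a shell on the host, the sketch below appends it to authorized_keys with the permissions sshd expects and skips it if the exact line is already present. The function name and argument order are illustrative assumptions; the key text itself comes from the Morpheus modal.

```shell
# Sketch: append a public key to <home>/.ssh/authorized_keys idempotently.
# Arguments: the key line, and optionally the home directory (defaults to $HOME).
add_authorized_key() {
  local key="$1" home="${2:-$HOME}"
  mkdir -p "$home/.ssh" && chmod 700 "$home/.ssh" || return 1
  touch "$home/.ssh/authorized_keys" && chmod 600 "$home/.ssh/authorized_keys" || return 1
  # Append only if this exact key line is not already present.
  grep -qxF -- "$key" "$home/.ssh/authorized_keys" ||
    printf '%s\n' "$key" >> "$home/.ssh/authorized_keys"
}

# Example (paste the key revealed in the Create Host modal):
# add_authorized_key 'ssh-rsa AAAA... morpheus'
```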

On the Automation tab, select any relevant automation workflows and complete the modal. The new KVM host is now listed on the Hosts tab along with any other KVM hosts associated with this Cloud. If Inventory Existing Instances is enabled on the Cloud, any running VMs are discovered automatically with each Cloud sync (approximately every five minutes by default). Discovered VMs appear on the VMs tab of the Cloud detail page and in the list of all VMs known to Morpheus at Infrastructure > Compute > Virtual Machines.

Create a KVM Cluster

Out of the box, Morpheus includes layouts for KVM clusters. The default layouts are Ubuntu or CentOS 7-based and include one host. Users can also create their own KVM cluster layouts, either from scratch or by cloning a default KVM cluster layout and making changes. Custom cluster layouts can include multiple hosts, if desired. See the Morpheus documentation on cluster layouts for more information.

When creating KVM clusters, the Ubuntu or CentOS 7 box(es) must already be running, but you don’t need to install any additional packages yourself; Morpheus handles that as part of cluster creation. Complete the following steps to connect to your existing machines, provision a new KVM cluster, and associate it with the Cloud created in an earlier section:

Navigate to Infrastructure > Clusters

Click + ADD CLUSTER and select KVM Cluster

Choose a Group on the Group tab

Select the Cloud created earlier from the Cloud dropdown menu, then provide at least a name for the cluster and a resource name on the Name tab

On the Configure tab, set the following options:

LAYOUT: Select a default KVM cluster layout or a custom layout

SSH HOSTS: Enter a name and the machine address for the host; click the plus (+) button at the end of the row to add more hosts to the cluster

SSH PORT: The port for the SSH connection, typically 22

SSH USERNAME: The username for an administrator user on the host box(es)

SSH PASSWORD: The password for the account in the previous field

SSH KEY: Select a stored SSH keypair from the dropdown menu

LVM ENABLED: Mark if the destination box has LVM enabled

DATA DEVICE: If “LVM ENABLED” is marked, this field is available. The indicated logical volume will be added to the logical volume group if it doesn’t already exist

SOFTWARE RAID?: Mark to enable software RAID on the host box

NETWORK INTERFACE: Select the interface to use for the Open vSwitch Bridge

Click NEXT to finish configuration, then complete the modal after final review

After the cluster is added, its newly created hosts are listed on the Hosts tab of the KVM Cloud detail page (Infrastructure > Clouds > Selected KVM Cloud).
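Because the default layouts expect Ubuntu or CentOS 7, it can help to confirm each box’s base OS before entering it under SSH HOSTS. This sketch reads the standard ID and VERSION_ID fields of /etc/os-release; the function name and the version matching (any Ubuntu release, CentOS 7.x only) are assumptions based on the requirements described earlier.

```shell
# Sketch: classify a prospective KVM host from its os-release file.
# Prints "ubuntu" or "centos7" and succeeds; fails for anything else.
kvm_base_os() {
  local file="${1:-/etc/os-release}"
  # os-release is shell-compatible, so source it in a subshell to avoid
  # leaking its variables into the caller.
  (
    unset ID VERSION_ID
    . "$file" || exit 1
    case "$ID:$VERSION_ID" in
      ubuntu:*) echo ubuntu ;;
      centos:7*) echo centos7 ;;
      *) echo "unsupported: ${ID:-unknown} ${VERSION_ID:-unknown}" >&2; exit 1 ;;
    esac
  )
}

# Example (on each box before adding it to the cluster):
# kvm_base_os || echo "not a supported base OS for the default layouts"
```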

Provisioning to KVM

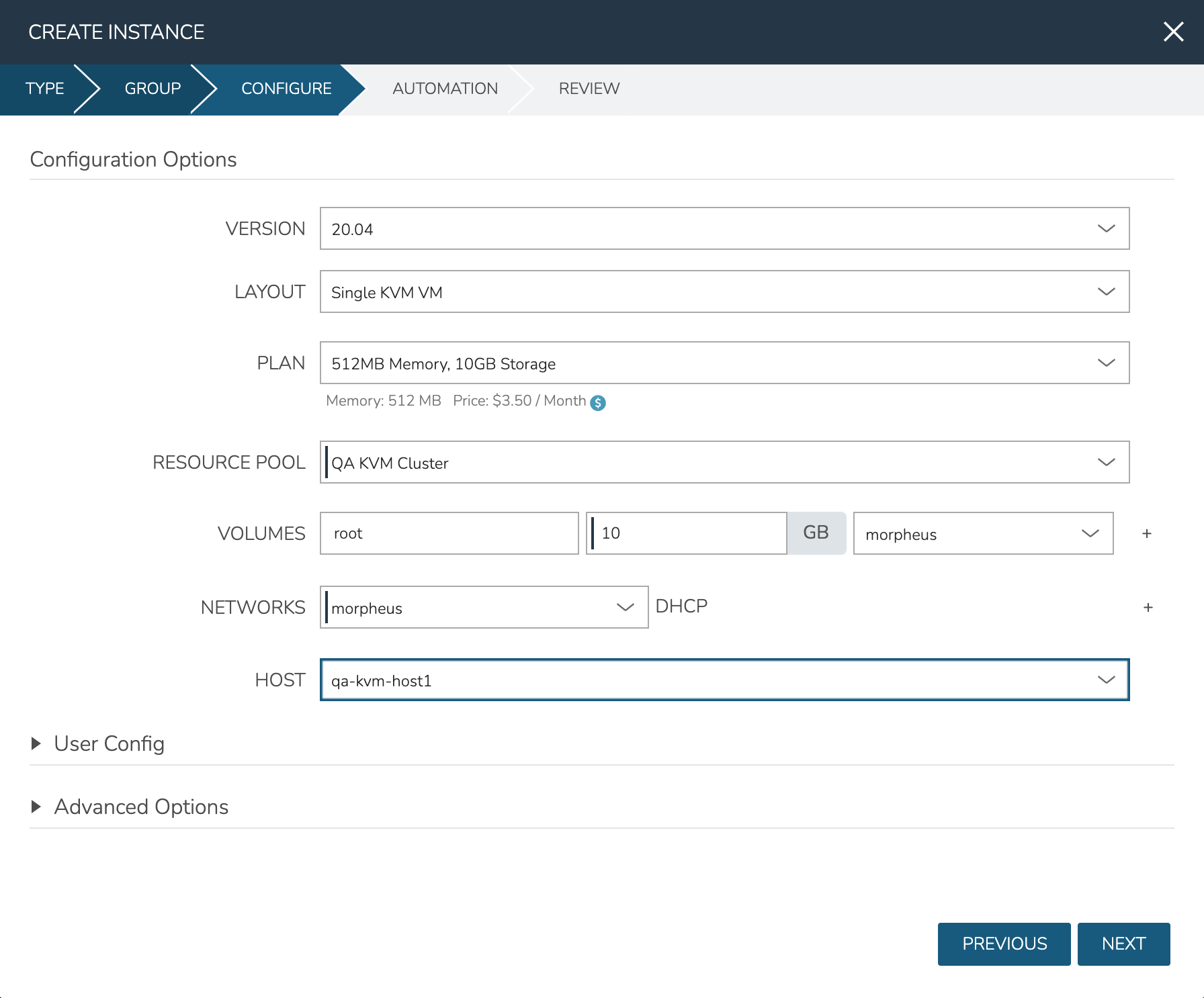

With the Cloud and hosts available, users can now provision to the KVM hosts using custom Instance Types and automation routines built in the Morpheus library. To provision a new Instance, navigate to Provisioning > Instances and click + ADD. Select the Instance Type to provision and click NEXT. Choose the Group that contains the KVM Cloud, then select the Cloud. Provide a name for the new Instance unless a naming policy already assigns one. Click NEXT to advance to the Configuration tab. The fields here vary with the Instance Type and Layout used; in this example, the following selections were made:

Layout: Single KVM VM

Resource Pool: The selected KVM cluster

Volumes: Configure the needed volumes and the associated datastore for each

Networks: The KVM network the VM(s) should belong to

Host: The selected host the VM(s) should be provisioned onto

Complete the remaining steps of the provisioning wizard, and the new KVM Instance will be created.

Adding VLANs to Morpheus KVM Hosts (CentOS)

Getting Started

This guide covers configuring VLANs on a Morpheus KVM host. First, add the KVM host to Morpheus and allow Morpheus to configure it just like any other KVM host. When provisioning a manual KVM host, be sure to enter the correct network interface name for the management network (not the trunk port). In this example, eno2 is the management network and eno1 is the trunk port carrying the VLANs.

Setting up a VLAN Interface

Before KVM can use a VLAN, an interface definition must be configured for that VLAN. On CentOS, this is done by defining a network script in /etc/sysconfig/network-scripts.

Note

It is highly recommended to set NM_CONTROLLED=no in these scripts or to disable NetworkManager entirely, as it tends to interfere with manually configured interfaces.

If the trunk interface is eno1, a new script is needed for each VLAN ID to be bridged. This example uses VLAN 211, so the script is named ifcfg-eno1.211 (the VLAN ID is appended to the interface name after a period; this naming is conventional and required).

```
TYPE=Ethernet
PROXY_METHOD=none
BROWSER_ONLY=no
BOOTPROTO=none
NAME=eno1.211
DEVICE=eno1.211
ONBOOT=yes
NM_CONTROLLED=no
VLAN=yes
DEVICETYPE=ovs
OVS_BRIDGE=br211
```

There are a few important things to note about this script. First, the VLAN=yes flag enables kernel tagging for the VLAN. Second, an OVS_BRIDGE name is defined. Morpheus uses Open vSwitch (openvswitch) for its networking, a powerful tool also used by OpenStack Neutron that supports VXLAN interfaces as well as VLANs.

Because OVS_BRIDGE references br211, a matching bridge interface must also be defined, in a script called ifcfg-br211:

```
DEVICE=br211
ONBOOT=yes
DEVICETYPE=ovs
TYPE=OVSBridge
NM_CONTROLLED=no
BOOTPROTO=none
HOTPLUG=no
```
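Because a pair of scripts is needed for every VLAN ID, generating them can reduce typos. The sketch below writes both files using the same keys as the examples above. The eno1.&lt;VLAN&gt; and br&lt;VLAN&gt; naming follows this example rather than any stated requirement, and the NETWORK_SCRIPTS_DIR override and write_vlan_ifcfg name are hypothetical.

```shell
# Sketch: write the VLAN port script and its OVS bridge script for one VLAN.
# Hypothetical: NETWORK_SCRIPTS_DIR overrides the CentOS default directory.
write_vlan_ifcfg() {
  local trunk="$1" vlan="$2"
  local dir="${NETWORK_SCRIPTS_DIR:-/etc/sysconfig/network-scripts}"
  # 802.1Q VLAN IDs range from 1 to 4094; reject anything else.
  case "$vlan" in ''|*[!0-9]*) echo "invalid VLAN ID: $vlan" >&2; return 1 ;; esac
  if [ "$vlan" -lt 1 ] || [ "$vlan" -gt 4094 ]; then
    echo "invalid VLAN ID: $vlan" >&2; return 1
  fi
  local port="$trunk.$vlan" bridge="br$vlan"
  # VLAN sub-interface attached to the OVS bridge.
  cat > "$dir/ifcfg-$port" <<EOF
TYPE=Ethernet
PROXY_METHOD=none
BROWSER_ONLY=no
BOOTPROTO=none
NAME=$port
DEVICE=$port
ONBOOT=yes
NM_CONTROLLED=no
VLAN=yes
DEVICETYPE=ovs
OVS_BRIDGE=$bridge
EOF
  # Matching OVS bridge definition.
  cat > "$dir/ifcfg-$bridge" <<EOF
DEVICE=$bridge
ONBOOT=yes
DEVICETYPE=ovs
TYPE=OVSBridge
NM_CONTROLLED=no
BOOTPROTO=none
HOTPLUG=no
EOF
}

# Example (as root): write_vlan_ifcfg eno1 211
```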

These scripts make the interfaces persistent, so the host retains connectivity to the bridges after a reboot. Next, the interfaces need to be brought online. Restarting all network services would work, but a typo in a script could leave networking disabled and break SSH access. To bring up only these interfaces instead, run:

```shell
ifup eno1.211
ifup br211
# --may-exist makes this a no-op if ifup has already created the bridge
ovs-vsctl --may-exist add-br br211
```
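Before moving on to libvirt, you can confirm that Open vSwitch knows about the bridge and that the VLAN interface is attached to it. The sketch relies on the standard ovs-vsctl br-exists and list-ports subcommands; the function name is illustrative.

```shell
# Sketch: check that an OVS bridge exists and that a given port is attached.
verify_ovs_vlan() {
  local bridge="$1" port="$2"
  # br-exists exits 0 when the bridge exists and 2 when it does not.
  if ! ovs-vsctl br-exists "$bridge"; then
    echo "bridge $bridge not found in Open vSwitch" >&2
    return 1
  fi
  # list-ports prints one attached port name per line.
  if ! ovs-vsctl list-ports "$bridge" | grep -qxF -- "$port"; then
    echo "port $port is not attached to $bridge" >&2
    return 1
  fi
  echo "$port is attached to $bridge"
}

# Example: verify_ovs_vlan br211 eno1.211
```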

Defining a libvirt Network

Now that the bridge interface is defined for OVS, it must also be defined in libvirt so that Morpheus can detect the network and KVM can use it. By convention, these resource configurations are stored in /var/morpheus/kvm/config.

An XML definition is needed to define the network. In this example, the file is named public 185.3.48.0.xml:

```xml
<network>
  <name>public 185.3.48.0</name>
  <forward mode="bridge"/>
  <bridge name="br211"/>
  <virtualport type="openvswitch"/>
</network>
```
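The same definition can be rendered for any network name and bridge. In this sketch, the KVM_CONFIG_DIR override and the write_ovs_network_xml name are hypothetical; the default directory is the /var/morpheus/kvm/config convention mentioned above. The name is written into the XML verbatim, so it should not contain XML special characters such as &amp; or &lt;.

```shell
# Sketch: write a libvirt network definition that bridges onto an OVS bridge,
# then print the file path. KVM_CONFIG_DIR is a hypothetical override.
write_ovs_network_xml() {
  local name="$1" bridge="$2"
  local dir="${KVM_CONFIG_DIR:-/var/morpheus/kvm/config}"
  local xml="$dir/$name.xml"
  mkdir -p "$dir" || return 1
  # Same structure as the example definition above.
  cat > "$xml" <<EOF
<network>
  <name>$name</name>
  <forward mode="bridge"/>
  <bridge name="$bridge"/>
  <virtualport type="openvswitch"/>
</network>
EOF
  echo "$xml"
}

# Example:
# virsh net-define "$(write_ovs_network_xml "public 185.3.48.0" br211)"
```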

This configuration defines the network name that will be synced into Morpheus for selection, as well as the type of interface being used (in this case, a bridge to the br211 interface over Open vSwitch).

Next, register the definition with libvirt using virsh, running the commands from the directory that contains the XML file:

```shell
virsh net-define "public 185.3.48.0.xml"
virsh net-autostart "public 185.3.48.0"
virsh net-start "public 185.3.48.0"
```
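After running these commands, virsh net-info can confirm that the network is active and set to autostart before you refresh the Cloud. The sketch below parses the Active and Autostart lines of that output; the function name is illustrative.

```shell
# Sketch: confirm a libvirt network is active and autostarted by parsing the
# "Active:" and "Autostart:" lines printed by virsh net-info.
check_libvirt_network() {
  local name="$1" info active autostart
  info=$(virsh net-info "$name") || return 1
  active=$(printf '%s\n' "$info" | awk -F': *' '$1 == "Active" { print $2 }')
  autostart=$(printf '%s\n' "$info" | awk -F': *' '$1 == "Autostart" { print $2 }')
  echo "active=$active autostart=$autostart"
  # Succeed only when both flags report "yes".
  [ "$active" = yes ] && [ "$autostart" = yes ]
}

# Example: check_libvirt_network "public 185.3.48.0"
```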

Once this is complete, refresh the Cloud in Morpheus and wait for the network to sync into the networks list. After it syncs, configure the network’s settings within Morpheus, including the CIDR, gateway, nameservers and, if using IP Address Management, the IPAM pool.